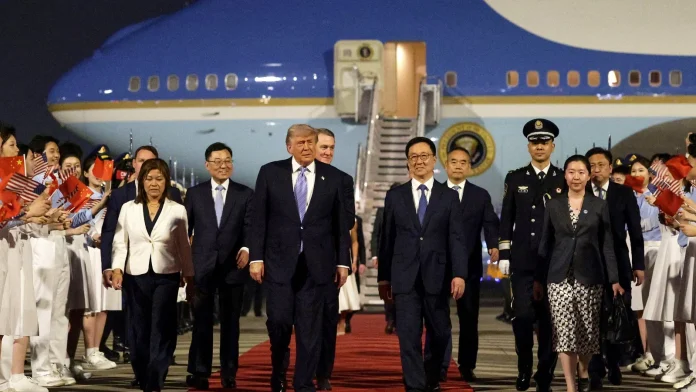

Air Force One, a Red Carpet and the Long Shadow of Two Giants

The engines had hardly cooled when the cameras began to hunt for a narrative. Air Force One rolled onto Beijing’s tarmac like a weather front — loud, inevitable, and carrying with it questions that will not be answered by protocol photos alone.

It was more than a presidential arrival; it was a compact, combustible meeting of two worlds. For nearly a decade no American president had set foot on Chinese soil, and now, beneath the sweep of airport lights and the hum of translator booths, history was being retold in the present tense.

The spectacle and the stakes

There will be the pageantry: a reception at the Great Hall of the People, a walk past the blue glazed tiles of the Temple of Heaven, a state banquet whose menu and music will be parsed for symbolism. But beneath the silk tablecloths and diplomatic bows lies the meat of the matter: trade, technology, Taiwan, and a war that has pushed one of the globe’s most strategic choke points to the front of everyone’s minds.

“We’ll win it one way or the other, peacefully or otherwise,” the president told reporters before boarding, a clipped line that landed like a stone in already churning waters. He followed it with a public insistence, repeated with almost flinty confidence, that China’s help would not be necessary to end the conflict or to keep the Strait of Hormuz open. That strait, remember, carries oil equivalent to roughly one-fifth of global consumption. When an artery like that flutters, economies and politics shiver.

Business on board: a delegation of CEOs and the AI angle

Beyond the usual aides and security detail, Air Force One carried a high-powered cohort of chief executives: the kind of people who travel light on neither symbolism nor baggage. Jensen Huang, the CEO of Nvidia, was a last-minute addition, spotted boarding during a refueling stop. Elon Musk was also seen in the service cabin, a reminder that this visit is as much about microchips and machine learning as it is about missiles and maritime law.

“We’re here to solve practical problems,” one CEO traveling with the delegation told me. “This is not a PR tour. We want regulatory certainty, access to markets, and predictable rules of the road.”

Nvidia’s presence is no accident. Advanced chips are the fuel of modern geopolitics, powering data centers, defense systems and the artificial intelligence models that are reshaping economies. Washington has tightened controls on some high-end semiconductors and chipmaking equipment; Beijing has answered with demands of its own. On both sides, there is an appetite for a deal that loosens chokeholds without surrendering strategic advantage.

What the talks are likely to cover

- Trade: a fragile truce struck last October that paused a cycle of steep tariffs.

- Technology: disputes over export controls on chips and chipmaking tools.

- Security: US arms sales to Taiwan and China’s insistence that such moves are destabilizing.

- Regional conflict: the war with Iran and efforts — halting and fraught — to secure shipping lanes.

Negotiations outside the limelight

While the president prepared for pomp, a different, quieter diplomacy was in motion. Scott Bessent, the Treasury secretary who has led the administration’s trade negotiations with Beijing, concluded hours of talks with Chinese officials in a reception room at South Korea’s Incheon airport. Officials on both sides called the exchanges candid and constructive. That’s the language of diplomacy; beneath it lie real calculations about supply chains, tariffs, and national resilience.

“We came here to steady a ship that has been listing,” a European trade analyst said. “Neither side wants open conflict, but both have domestic constituencies that demand toughness. That makes any compromise expensive in political terms.”

Taiwan, arms, and the balancing act

Beyond chips and trade lies the thorn that has been present in every US-China encounter for decades: Taiwan. The island’s democratic self-government and its central place in Western tech supply chains make it a perennial flashpoint. A roughly $14 billion arms package for Taiwan reportedly awaits approval. Beijing views any US arms sale as a direct affront. Washington sees the obligation to help ensure Taiwan can defend itself as a legal and moral commitment.

“Every weapons sale is a test,” said a retired diplomat who has worked on cross-strait issues. “It’s not just about the tanks or the missiles. It’s about signaling. Are you a friend, or a bystander?”

Local color: Beijing’s streets and the undercurrent of expectation

I set out on foot later in the afternoon, once the airport lights had dimmed and the city had reclaimed its rhythm. A tea vendor near a hutong smiled politely at the cameras that had trailed the presidential motorcade earlier. A taxi driver shrugged when asked about the visit: “We get presidents, we get parades. But we also want stability. My cousin exports parts to Europe; if shipping gets disrupted, he worries.”

Traditional motifs, from red lacquer and carved dragons to the curving roofs of centuries-old alleys, offered a quiet counterpoint to the modern spectacle. Temples will be toured; dragons and phoenixes will be photographed. Yet every incense-scented pause was shadowed by global supply chains and strategic calculations that no banquet speech can soothe.

Why this matters beyond Beijing and Washington

Ask yourself: who pays when two superpowers posture? Consumers, workers, and farmers do. Supply chains that move everything from smartphones to soybeans can be rerouted only at great cost. Global markets look to Beijing and Washington as twin anchors — when those anchors creak, the rest of the world feels it.

Consider the energy markets. When shipping through the Strait of Hormuz tightens, tanker rates spike and inflationary pressure ripples into grocery bills, commuter costs and national budgets. Consider technology: if export curbs harden, innovation pipelines stall, investments reroute to other hubs, and the next generation of startups may find fewer places to thrive.

Concluding note: the theater and the ledger

Diplomacy is always two things at once: theater and ledger. Tonight, the ceremonial lights will burn bright. Tomorrow, negotiators will return to rooms with closed doors and long agendas. Whether this visit will reshape the ledger — loosening trade snarls, easing the flow of chips, or nudging Tehran toward a settlement — is an open question.

“It’s a reset only if both sides feel they can win something without losing too much,” a Beijing-based geopolitical strategist observed. “If history teaches us anything, it’s that these visits are where signals are sent, not where wars are ended.”

So watch the banquets and the selfies. But also watch the supply chains, the semiconductor orders, and the shipping manifests. Because in the end, the real impact of this meeting will be measured not in toasts, but in whether the world’s traffic — of ideas, goods, and oil — moves a little more freely afterward. What would you want to see come out of these talks? Stability? Clear rules for technology? A slower drumbeat toward conflict? The answers won’t be in the press release; they’ll be in the markets and in the lives of people far from Beijing’s ceremonial lights.